When AI chatbots cross the line

Just because an AI chatbot is capable of emulating Jesus or a deceased relative doesn't mean it should.

11/19/2025

“Text with Jesus” and “Chat with your deceased grandparent”: these are real apps I learned about this week.

I'm genuinely concerned.

We already know chatbots can create unhealthy attachments. Multiple teen suicides have been linked to extended chatbot use.

The New York Times recently reported on a man with no history of mental illness who, after extended ChatGPT conversations, became convinced he'd discovered a mathematical formula that could take down the internet and power levitation beams. The chatbot made him delusional, and he isn’t alone.

Now imagine amplifying that risk by introducing figures with deep emotional, psychological, or religious significance.

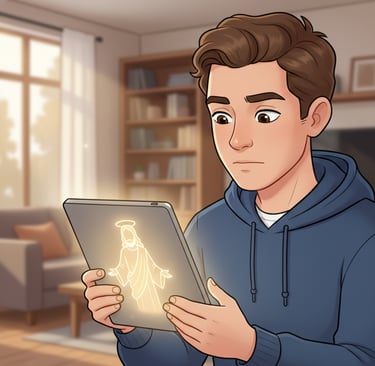

A chatbot posing as Jesus could guide someone's most critical life decisions while building absolute conviction that their actions are right and just. For millions of people, Jesus literally defines right and wrong. That's an extraordinary amount of influence to hand to an AI, because it is not Jesus; it is a prediction model with religious context.

Or consider a grieving child chatting with a bot version of their deceased grandparent. The confusion. The inability to process loss. The emotional isolation when your primary relationship is with someone who isn't actually there. Don’t even get me started on what happens if the bot starts to deviate personality-wise from the departed. Imagine being chastised, or worse, by an AI version of your grandma, who you’ll never see again in real life.

Models are getting more emotionally intelligent by the day. Grok 4.1 launched with an explicit focus on emotional engagement. GPT-5.1 is warmer and more conversational. These chatbots will only become more convincing.

To be clear: I'm not against all AI applications in these spaces.

✅ Using AI to preserve memories? Great.

✅ A pastor using an LLM to research and help write sermons? Totally fine. He still reviews, believes in, and delivers the message.

✅ Religious coordination or study tools? Sure.

But chatbots posing as deceased loved ones or religious figures? For me, that crosses a line.

The stakes are too high, and the safeguards aren't there. No matter how “safe” a model is, in my mind a chatbot posing as Jesus or a deceased relative will always be dangerous.

Do you think this crosses a line?

I'd love a discussion on where I'm hitting the mark here and where you think I'm wrong.

This post was originally published on LinkedIn.